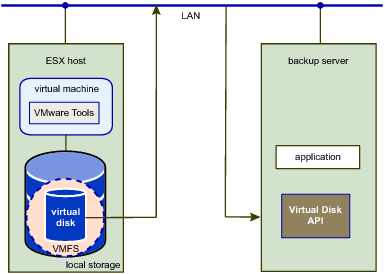

The virtual disk library can read virtual disk data from /vmfs/volumes on ESXi hosts, or from the local file system on hosted products. This file access method is built into VixDiskLib, so it is always available on local storage. However, it is not a network transport method, and it is seldom used for vSphere backup.

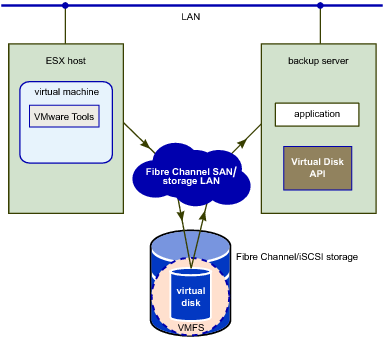

SAN mode requires applications to run on a backup server with access to the SAN storage (Fibre Channel, iSCSI, or SAS connected) that contains the virtual disks to be accessed. As shown in SAN Transport Mode for Virtual Disk, this method is efficient because no data needs to be transferred through the production ESXi host. A SAN backup proxy must be a physical machine. If it has an optical media drive or tape drive connected, backups can be made entirely LAN-free.

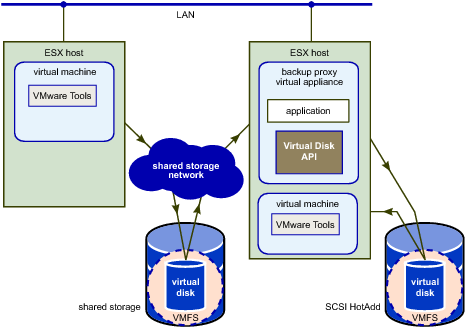

HotAdd is a good way to get virtual disk data from a virtual machine to a backup appliance (or backup proxy) for sending to the media server. The attached HotAdd disk is shown in HotAdd Transport Mode for Virtual Disk.

If the HotAdd proxy is a virtual machine that resides on a VMFS-3 volume, choose a volume with a block size appropriate for the maximum virtual disk size of the virtual machines that customers want to back up, as shown in VMFS-3 Block Size for HotAdd Backup Proxy. This caveat does not apply to VMFS-5 volumes, which always have a 1MB file block size.
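The block size choice can be sketched as a small lookup. The helper below is hypothetical and not part of VDDK. The limits in its table (1MB for 256GB, 2MB for 512GB, 4MB for 1024GB, 8MB for 2048GB) are the commonly cited VMFS-3 values and are an assumption here, so check them against VMFS-3 Block Size for HotAdd Backup Proxy before relying on them.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* One VMFS-3 file block size and the largest virtual disk it can hold.
 * ASSUMPTION: values are the commonly cited VMFS-3 limits, not taken
 * from this section; verify against the referenced table. */
typedef struct {
    unsigned block_size_mb;
    uint64_t max_disk_gb;
} Vmfs3Limit;

static const Vmfs3Limit kVmfs3Limits[] = {
    {1, 256}, {2, 512}, {4, 1024}, {8, 2048},
};

/* Hypothetical helper: returns the smallest VMFS-3 block size (in MB)
 * whose limit covers a virtual disk of disk_gb gigabytes, or 0 if no
 * VMFS-3 block size is large enough. */
unsigned vmfs3_min_block_size_mb(uint64_t disk_gb)
{
    assert(disk_gb > 0); /* a zero-sized disk is treated as a caller error */
    size_t count = sizeof kVmfs3Limits / sizeof kVmfs3Limits[0];
    for (size_t i = 0; i < count; i++) {
        if (disk_gb <= kVmfs3Limits[i].max_disk_gb) {
            return kVmfs3Limits[i].block_size_mb;
        }
    }
    return 0;
}
```

A proxy that must back up disks of up to 300GB would, under these assumed limits, need a volume with at least a 2MB block size.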

As of vSphere 6.5, NBDSSL performance can be significantly improved using data compression. Three types of compression are available – zlib, fastlz, and skipz – specified as flags when opening virtual disks with the VixDiskLib_Open() call. See Open a Local or Remote Disk.
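As a rough illustration of selecting a compression type, the sketch below builds the flags word for a read-only open. The helper nbdssl_open_flags() is hypothetical. The macro names are the ones believed to appear in vixDiskLib.h, but the numeric values in the fallback definitions are an assumption made so the sketch compiles without the header. Real code should include vixDiskLib.h and use its definitions.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

/* Fallback stand-ins so this sketch compiles without vixDiskLib.h.
 * ASSUMPTION: the numeric values are not confirmed by this section;
 * include the real header in production code. */
#ifndef VIXDISKLIB_FLAG_OPEN_READ_ONLY
#define VIXDISKLIB_FLAG_OPEN_READ_ONLY          (1u << 2)
#endif
#ifndef VIXDISKLIB_FLAG_OPEN_COMPRESSION_ZLIB
#define VIXDISKLIB_FLAG_OPEN_COMPRESSION_ZLIB   (1u << 4)
#define VIXDISKLIB_FLAG_OPEN_COMPRESSION_FASTLZ (1u << 5)
#define VIXDISKLIB_FLAG_OPEN_COMPRESSION_SKIPZ  (1u << 6)
#endif

/* Hypothetical helper: returns flags for a read-only open with the named
 * compression type. Any name other than "zlib", "fastlz", or "skipz"
 * selects no compression. */
uint32_t nbdssl_open_flags(const char *compression)
{
    assert(compression != NULL);
    uint32_t flags = VIXDISKLIB_FLAG_OPEN_READ_ONLY;
    if (strcmp(compression, "zlib") == 0) {
        flags |= VIXDISKLIB_FLAG_OPEN_COMPRESSION_ZLIB;
    } else if (strcmp(compression, "fastlz") == 0) {
        flags |= VIXDISKLIB_FLAG_OPEN_COMPRESSION_FASTLZ;
    } else if (strcmp(compression, "skipz") == 0) {
        flags |= VIXDISKLIB_FLAG_OPEN_COMPRESSION_SKIPZ;
    }
    return flags;
}
```

The returned value would be passed as the flags argument of VixDiskLib_Open() on a connection that uses NBDSSL transport. Only one compression type is set at a time in this sketch.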

NBDSSL employs the network file copy (NFC) protocol. NFC Session Connection Limits shows limits on the number of connections for various host types. These are host limits, not per-process limits. Additionally, vCenter Server imposes a limit of 52 connections. VixDiskLib_Open() uses one connection for every virtual disk that it accesses on an ESXi host. Cloning with VixDiskLib_Clone() also requires a connection. It is not possible to share a connection across physical disks. These NFC session limits do not apply to SAN or HotAdd transport.
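A backup application might track its own connection use before opening more disks. The sketch below is a minimal illustration with hypothetical helper names. It counts one connection per open virtual disk and one per active clone, as described above, and uses the vCenter Server limit of 52 as an example ceiling. Because the limits are host-wide rather than per-process, other clients may consume connections too, so the result is an optimistic estimate.

```c
#include <assert.h>
#include <stdbool.h>

/* Limit stated in the text for connections through vCenter Server. */
#define VCENTER_NFC_CONNECTION_LIMIT 52u

/* Hypothetical helper: connections still available to this application,
 * assuming one connection per open disk and one per active clone. */
unsigned nfc_connections_remaining(unsigned limit,
                                   unsigned open_disks,
                                   unsigned active_clones)
{
    assert(limit > 0);
    unsigned in_use = open_disks + active_clones;
    return in_use >= limit ? 0u : limit - in_use;
}

/* Hypothetical helper: true if disks_to_open more disks fit in the budget. */
bool nfc_can_open(unsigned limit, unsigned open_disks,
                  unsigned active_clones, unsigned disks_to_open)
{
    return disks_to_open <=
           nfc_connections_remaining(limit, open_disks, active_clones);
}
```

For example, with 50 disks open and one clone in progress, nfc_can_open(VCENTER_NFC_CONNECTION_LIMIT, 50, 1, 2) is false because only one connection remains in the budget.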

On Windows, VDDK 5.1 and 5.5 required the VerifySSLCertificates and InstallPath registry keys under HKEY_LOCAL_MACHINE\SOFTWARE to check SSL certificates. On Linux, VDDK 5.1 and 5.5 required adding a line to the VixDiskLib_InitEx configuration file to set linuxSSL.verifyCertificates = 1.

The following library functions enforce SSL certificate checking: InitEx, PrepareForAccess, EndAccess, GetNfcTicket, and the GetRpcConnection interface that is used by all advanced transports. SSL verification may use thumbprints to check if two certificates are the same. The vSphere thumbprint is a cryptographic hash of a certificate obtained from a trusted source such as vCenter Server, and passed in the SSLVerifyParam structure of the NFC ticket.
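To illustrate checking whether two thumbprints refer to the same certificate, the sketch below compares two thumbprint strings. It assumes thumbprints are written as hexadecimal digits, optionally separated by colons or spaces, which is a common display format but is not specified in this section. The helper thumbprints_match() is hypothetical and is not a VDDK function.

```c
#include <assert.h>
#include <ctype.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical helper: compares two thumbprints given as hex strings,
 * ignoring letter case and ':' or ' ' separators. Returns false if either
 * string contains a non-hex character or if both are empty. */
bool thumbprints_match(const char *a, const char *b)
{
    assert(a != NULL && b != NULL);
    size_t digits = 0;
    for (;;) {
        /* Skip separators on both sides before comparing digits. */
        while (*a == ':' || *a == ' ') a++;
        while (*b == ':' || *b == ' ') b++;
        if (*a == '\0' || *b == '\0') {
            /* Match only if both ended together and something was compared. */
            return *a == *b && digits > 0;
        }
        if (!isxdigit((unsigned char)*a) || !isxdigit((unsigned char)*b)) {
            return false;
        }
        if (tolower((unsigned char)*a) != tolower((unsigned char)*b)) {
            return false;
        }
        a++;
        b++;
        digits++;
    }
}
```

With this helper, "AB:CD:EF" and "abcdef" compare as equal, while thumbprints of different lengths do not. The sketch only compares strings. Obtaining the thumbprint from a trusted source, such as vCenter Server, remains the caller's responsibility.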